Continuing our exploration of how every filmmaker’s path is different, we asked Astrid Goldsmith about her journey from stop-motion animation to AR/XR/VR, the power of immersive media to tell stories, and how to adapt your narrative to different mediums.

Astrid Goldsmith

Astrid Goldsmith is a writer-director who lives in Folkestone. In 2012 she set up her own animation studio, Mock Duck Studios, specialising in puppet stop-motion, and made a string of award-winning short films. Her last stop-motion film, the BFI Network-backed Red Rover, won Best Animated Film at Sitges 2020, and was Oscar long-listed. Mock Duck’s commercial work includes the Duracell Bunny, a belligerent troll for Nike, a cyclops elephant for Ford Fiesta, a sexy worm for Trolli, and many music videos. Astrid was part of the writing team for Netflix animated series Bad Dinosaurs, and her debut graphic novel The Crystal Vase is being published by Jonathan Cape in January 2026. In 2025, she wrote and co-directed the AR project The Folkestone Elephant with Craig Gell.

Creating the Folkestone Elephant

How do you make an elephant come out of a hill? This was our first question when we started thinking about The Folkestone Elephant. The project began during a walk on the Folkestone Downs with my co-director, Craig Gell. We had always been drawn to Summerhouse Hill, with its strange conical shape and large chalk mark on one side, which locals call the Folkestone Elephant because of its vague resemblance to an elephant’s head. Craig started talking about making a piece of work that would bring the chalk hill figure to life, potentially by using augmented reality (AR) to make a 3D chalk elephant appear on your phone as you approach the hill. Neither of us had worked with AR before – I’m a stop-motion filmmaker, and Craig is primarily a sound artist – and we didn’t really know where to start.

We were in luck, though: a few months later, Screen South ran the Hi3 Story Lab, a free short course for creators with immersive media projects. Craig and I developed our half-baked elephant idea over the course of spring 2023, through intensive narrative sessions and immersive workshops. As we wrote the story, it grew into a multi-faceted project in the form of an elaborate hoax, combining faux-archive documentary, animation, and geo-located AR to build a whole imagined history of the elephant folklore.

By the end of the Hi3 course, we had a good overview of the immersive technologies available, and understood that for this story – experienced as a guided journey through open countryside with no access to power, where the story unfolds across the walk – the only option for us was mobile AR. A big part of the experience was the physical act of walking in the elements and feeling connected to the environment in a new way, which is exactly what mobile AR allows. Craig started testing out A-Frame (an open-source framework for building web-based XR apps), placing virtual objects across the Folkestone Downs for us to find on walks, and we consulted a gaming professor at Canterbury Christ Church University, who gave us more specific advice on the project’s tech requirements.

We applied for BFI NETWORK funding with a complete script, but we knew everything was subject to change during the app-testing phase. We deliberately built the narrative in discrete units, with each angle of the story told as a separate film from a different time period (e.g. a 1930s Pathé-style newsreel, a 1980s schools geography video), so that the order could be shuffled during testing if necessary, or even skipped entirely by users. We also rewrote the user pathway many times throughout the process before landing on the interactive survey format. We learned from other XR creators that a clear ‘onboarding’ process (e.g. when your audience first opens your app, or is handed the VR headset) is crucial to successful engagement, so we went through many iterations to get the tone right for the wraparound text and instructions. There was a lot of hidden extra work here that we hadn’t factored in: we invented a whole imaginary local history society, and ended up building the Folkestone Landmarks and Monuments Society website (https://flams.org.uk/) to host the app and to create the world that we were inviting the audience into. During testing, we learned that some people like to read all the app information very carefully, while others don’t want to read anything and skip right past it, so we had to make sure the whole experience made sense whether or not you engaged with the instructions.

For production, we separated the roles quite cleanly: Craig was in charge of building the AR app and the digital animation, and I was in charge of directing and editing the live-action films. I had only ever directed animation before, Craig had never animated or worked in AR before, and our producer, Charlie Philips, had never worked in fiction before, so there was a lot of beginner’s energy in this project! In total there were four live-action short films made in the traditional way, one 2D animated film made in Blender, and one 3D animated loop, all of which had to be placed into the AR workflow.

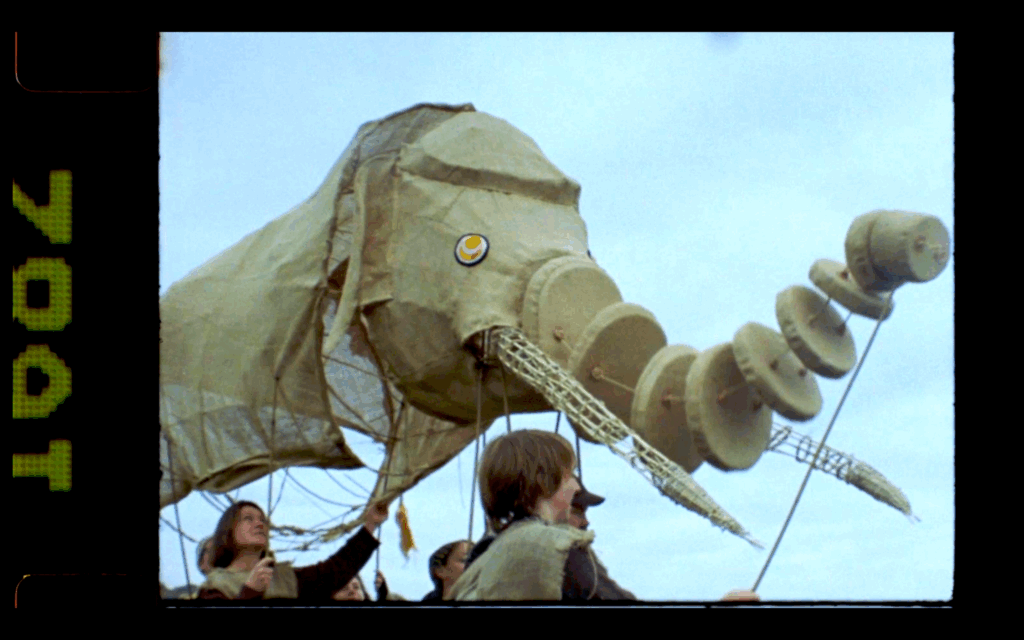

Two of the films were shot on 16mm, as the stock needed to be period-appropriate, so I knew we had a limited number of takes for those. I storyboarded each film and made animatics to check they worked in the edit. The biggest challenge was the 1970s folk parade film, which involved a giant elephant puppet, musicians, Morris dancers, and 50 extras in period costume. It was due to be filmed on Summerhouse Hill in September 2024, but three days before the shoot the MOD withdrew its permission for us to film there, so we spent months rearranging the shoot and finding a new site that could double for the hill. It was November when we finally shot it, and freezing!

The 3D animated loop of the elephant ghost was also a challenge, as we wanted it to retain an analogue, real-world feel. I sculpted a small model elephant from styrofoam and Foamcoat that looked as if it had been roughly hewn from chalk, and then made a 3D scan of it using Polycam. Craig imported the 3D capture into Blender to clean up the mesh, build an armature, and add rigging points to animate the walk. The animated elephant was the trickiest asset to place in the app, as it was impossible to judge scale and location without being on-site. So there were several test walks where we’d reach Summerhouse Hill (around 30–40 minutes on foot from the nearest parking place), only to find the elephant was the size of a mouse, roaming the neighbouring field. Eventually Craig managed to nail the exact location, and now it emerges from the hill at the correct scale.

We combined the app launch with an interactive Folkestone Elephant exhibition at Folkestone Museum, which drew over 10,000 visitors during its three-month run. We also got permission from the local parish council to put our poster on their public noticeboard, which is handily positioned at the start of the AR walk, so users can scan the poster’s QR code there to begin. The route follows a public footpath, so we didn’t need to seek permission, but we did highlight the access requirements at the start. The app includes a live location map with a red path to follow, but after public testing, we realised we needed to include written instructions that pop up at several points as well.

XR is a rapidly evolving field, so new immersive creators should factor this instability into their planning; even within our project’s lifespan, web-based AR has improved significantly. There’s no central hub of information about all this stuff, so if you’re considering creating immersive work for the first time, I would recommend taking advantage of any free workshops or tech support from regional film hubs and attending immersive arts festivals like The Big Thing or Electric Medway. There is also a strand of the Immersive Arts funding scheme designed for early-stage creators, which includes an element of training. If you are thinking of applying for BFI NETWORK funding, I recommend keeping your idea as simple as possible and building a large contingency into your budget! (For the BFI NETWORK Short Film Fund, you are allowed a 10% contingency in your production budget.) We found that making immersive work is almost like going through production twice, as the public testing phase throws up unforeseen problems that can be costly to solve. But more than anything, both Craig and I wish that we had known how accessible immersive technology is, and had started exploring it earlier. Tools like A-Frame are free and, at least for Craig with his coding and web design background, easy to use, and immersive storytelling is now an option for my future projects.

Have you seen the Folkestone Elephant? Directions to start the AR walk are here: https://flams.org.uk/ar/

If you are unable to visit the site in person, an alternative virtual reality version is available here: https://flams.org.uk/vr/ (desktop only)